TOON: The Token-Efficient Data Format Built for the AI Era

Introduction: A New Era in Data Formats for AI

Think back to the early days of the web. Developers were buried under XML – endless angle brackets, noisy structure, and documents that were barely readable. Then JSON arrived. It didn’t just clean things up; it changed the way the web communicated. Lighter, cleaner, and instantly easier to work with.

The Shift from XML to JSON

Fast forward to today, and we’re facing a similar challenge in the AI space. The conversation is no longer just machines talking to machines – it’s humans and AI exchanging structured data at massive scale.

The New Bottleneck: Token Costs

And suddenly, we’re hitting a new bottleneck:

Every. Single. Token. Costs.

As datasets expand and LLM-powered systems scale, every token becomes real money and real compute. That’s when you start asking: do we really need all this syntactic noise just to send data?

The Solution: TOON (Token-Oriented Object Notation)

That exact question led to the creation of TOON (Token-Oriented Object Notation) – a compact, token-efficient format designed specifically for the AI era.

What is TOON?

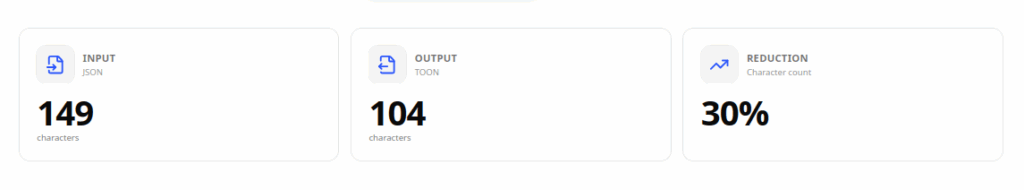

TOON stands for Token-Oriented Object Notation. It’s a compact, human-readable format that represents the same data as JSON but uses significantly fewer tokens in the process.

TOON is a new data serialization format designed with one core objective:

Reduce the number of tokens when exchanging structured data with language models.

While JSON uses verbose syntax with braces, quotes, and commas, TOON relies on a token-efficient tabular style, which is much closer to how LLMs naturally understand structured data.

LLMs process text as tokens, meaning you pay for every token sent and received. With large datasets or frequent API calls, these tokens can quickly accumulate, increasing both cost and processing time.

TOON tackles this problem head-on by combining:

- YAML’s clean indentation for nested objects

- CSV’s tabular efficiency for arrays of similar items

The result: a format that’s easy to read, structurally sound, and remarkably token-efficient.
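To make the "CSV-style" idea concrete, here is a minimal sketch of how an array of similar items could be written in tabular form. It assumes the `name[N]{fields}:` header layout described in the TOON specification; the helper name `encode_table` is hypothetical and is not part of any TOON library. It does no quoting or escaping, so it is an illustration rather than a real encoder.

```python
def encode_table(name, rows, indent="  "):
    """Render a uniform list of flat dicts as a TOON-style tabular array.

    Simplified sketch: values are written with str() and are not quoted or
    escaped, so commas or newlines inside values are not handled.
    """
    if not rows:
        raise ValueError("this sketch only handles non-empty arrays")
    fields = list(rows[0].keys())
    for row in rows:
        if list(row.keys()) != fields:
            raise ValueError("all rows must share the same fields in the same order")
    # Field names are declared once in the header instead of once per row.
    lines = [f"{name}[{len(rows)}]{{{','.join(fields)}}}:"]
    for row in rows:
        lines.append(indent + ",".join(str(row[field]) for field in fields))
    return "\n".join(lines)


users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
]
print(encode_table("users", users))
# users[2]{id,name,role}:
#   1,Alice,admin
#   2,Bob,user
```

The saving comes from the header: each field name appears once, however many rows follow.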

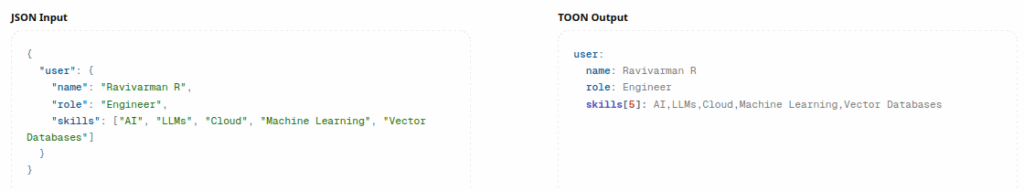

Seeing is Believing: JSON vs TOON

Let’s look at an example. Here’s some user data in traditional JSON:

    {
      "user": {
        "name": "Ravivarman R",
        "role": "Engineer",
        "skills": ["AI", "LLMs", "Cloud", "Machine Learning", "Vector Databases"]
      }
    }

Now, the same data in TOON:

    user:
      name: Ravivarman R
      role: Engineer
      skills[5]: AI,LLMs,Cloud,Machine Learning,Vector Databases

Notice the difference?

- No curly braces cluttering the view

- No quotation marks around every key and value

- No excessive commas

- Just clean, readable data

The token savings? Typically 25-50%, depending on your data structure. For applications making thousands of LLM calls daily, this translates to real cost savings and faster response times.
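To see what that range could mean in money, here is a back-of-the-envelope calculation. Every number in it is a hypothetical assumption (call volume, payload size, and price per million input tokens), so substitute your own figures and your provider's current pricing.

```python
def daily_savings(calls_per_day, tokens_per_call, reduction, usd_per_million_tokens):
    """Estimate daily input-token savings in USD for a given token reduction."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be a fraction between 0 and 1")
    tokens_saved = calls_per_day * tokens_per_call * reduction
    return tokens_saved * usd_per_million_tokens / 1_000_000


# Hypothetical workload: 10,000 calls per day, 2,000 payload tokens per call,
# at an assumed price of $3.00 per million input tokens.
for reduction in (0.25, 0.50):
    saved = daily_savings(10_000, 2_000, reduction, 3.00)
    print(f"{reduction:.0%} fewer tokens -> ${saved:.2f} saved per day")
# 25% fewer tokens -> $15.00 saved per day
# 50% fewer tokens -> $30.00 saved per day
```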

Why TOON Matters Right Now

LLMs like GPT, Claude, and Gemini run entirely on tokens. Every repeated field name, every brace, every comma: it all adds up. If you’ve ever passed a large JSON payload (a product catalog, order list, or user database), you’ve definitely felt that pain.

TOON addresses that directly. It keeps the structure, drops the clutter, and makes the data easier for the model to understand.

That means:

- 25–50% fewer tokens for large or repetitive datasets

- Cleaner structure for LLMs to parse

- Full nesting support, like JSON

- Compatibility with Python, Go, Rust, JavaScript

TOON doesn’t try to replace JSON. It simply steps in where JSON becomes too heavy, especially in AI workflows where every token counts.

Using TOON in Python

Getting started is incredibly simple.

Installation

First, install the package:

    pip install python-toon

Or, if you’re using a virtual environment with uv:

    uv init
    uv venv
    source .venv/bin/activate
    uv add python-toon

Encoding JSON to TOON

Converting your JSON data to TOON is a one-liner:

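    from toon import encode

    # Plain Python data, as you would get from json.loads()
    data = {
        "Employee": {
            "Name": "Ravivarman R",
            "Role": "Engineer",
            "Skills": ["Machine Learning", "AI", "LLM", "Vector Database"]
        }
    }

    # encode() returns the TOON representation as a string
    toon_data = encode(data)
    print(toon_data)

Output: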

    Employee:
      Name: Ravivarman R
      Role: Engineer
      Skills[4]: Machine Learning,AI,LLM,Vector Database

Decoding TOON Back to JSON

Need to convert back? Just as easy:

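    from toon import decode

    toon_string = """
    Employee:
      Name: Ravivarman R
      Role: Engineer
      Skills[4]: Machine Learning,AI,LLM,Vector Database
    """

    # decode() returns plain Python data (dicts and lists)
    json_data = decode(toon_string)
    print(json_data)

Output (the decoded data, shown here as formatted JSON):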

    {
      "Employee": {
        "Name": "Ravivarman R",
        "Role": "Engineer",
        "Skills": ["Machine Learning", "AI", "LLM", "Vector Database"]
      }
    }

When Should You Use TOON?

TOON is not a universal replacement for JSON. It shines in specific scenarios, particularly when working with LLMs and AI workflows.

Great for:

- Sending structured data to LLMs

- Large arrays with similar structure

- Agent frameworks that depend on token efficiency

- Preparing training datasets

- Serverless AI apps where latency and cost matter

Stick to JSON when:

- Data is deeply nested

- Every object is unique and irregular

- Schema validation is required

- You’re building traditional APIs
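One rough way to apply the two lists above is to check whether a payload is a uniform array of flat objects, the shape where a tabular layout helps most. The helper below is a hypothetical heuristic, not part of any TOON library, and its notion of "uniform" (identical keys, primitive values only) is an assumption.

```python
PRIMITIVES = (str, int, float, bool, type(None))


def is_uniform_table(items):
    """Return True if items is a non-empty list of flat dicts with identical keys."""
    if not isinstance(items, list) or not items:
        return False
    if not all(isinstance(item, dict) for item in items):
        return False
    keys = set(items[0])
    return all(
        set(item) == keys
        and all(isinstance(value, PRIMITIVES) for value in item.values())
        for item in items
    )


orders = [{"id": 1, "total": 9.5}, {"id": 2, "total": 12.0}]
mixed = [{"id": 1, "tags": ["a"]}, {"name": "x"}]
print(is_uniform_table(orders))  # True: same keys, primitive values
print(is_uniform_table(mixed))   # False: different keys and a nested list
```

Payloads that pass are good candidates for TOON; irregular or deeply nested ones are probably better left as JSON.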

Best of both worlds? Use both.

Store and serve data as JSON, and convert to TOON only when talking to LLMs. Simple and smart.
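A minimal sketch of that boundary might look like this. The function name `build_prompt` and the prompt layout are hypothetical. The encoder is passed in as a parameter, so the code falls back to compact JSON if the `python-toon` package from the installation step is not available.

```python
import json


def build_prompt(question, records_json, encoder=None):
    """Build an LLM prompt from JSON text, re-encoding the data at the boundary.

    encoder is any callable that turns a Python object into a string; when it
    is None the data is passed through as compact JSON.
    """
    data = json.loads(records_json)
    if encoder is None:
        payload = json.dumps(data, separators=(",", ":"))
    else:
        payload = encoder(data)
    return f"{question}\n\nData:\n{payload}"


try:
    # Assumes python-toon is installed and exposes encode() as shown earlier.
    from toon import encode as toon_encode
except ImportError:
    toon_encode = None  # fall back to compact JSON

stored = '{"user": {"name": "Ravivarman R", "role": "Engineer"}}'
print(build_prompt("Summarize this user.", stored, encoder=toon_encode))
```

Keeping the conversion in one function means storage, APIs, and validation stay on JSON, and only the prompt text changes format.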

Will TOON Become the “JSON for AI”?

It’s too early to claim that. But TOON is gaining traction quickly. Developers want something lightweight, readable, and cheap to send to models, and TOON hits that sweet spot.

Whether it becomes the next standard depends on adoption, tooling, and community support. But the early signs are promising.

Conclusion: Should You Start Using TOON?

If you’re building anything AI-driven, the answer is almost always yes.

You don’t have to replace JSON. Just try converting one structured LLM prompt into TOON and check the token usage yourself. If it saves you tokens (and for uniform, repetitive data it likely will), you’ll know exactly where TOON fits into your workflow.
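As a starting point for that check, the sketch below compares the user example from earlier in both formats. The counter is only a crude stand-in that counts words and punctuation marks. Real LLM tokenizers split text differently, so for real numbers use your model's own tokenizer. The function name `rough_token_count` is hypothetical, and the TOON text is written by hand with an assumed two-space indent.

```python
import json
import re


def rough_token_count(text):
    """Crude proxy: count runs of word characters and single punctuation marks.

    Real LLM tokenizers split text differently, so treat the result as a
    relative comparison only.
    """
    return len(re.findall(r"\w+|[^\w\s]", text))


data = {
    "user": {
        "name": "Ravivarman R",
        "role": "Engineer",
        "skills": ["AI", "LLMs", "Cloud", "Machine Learning", "Vector Databases"],
    }
}
as_json = json.dumps(data, indent=2)

# The same data in TOON, written out by hand.
as_toon = (
    "user:\n"
    "  name: Ravivarman R\n"
    "  role: Engineer\n"
    "  skills[5]: AI,LLMs,Cloud,Machine Learning,Vector Databases"
)

json_tokens = rough_token_count(as_json)
toon_tokens = rough_token_count(as_toon)
print(f"JSON: {json_tokens} rough tokens, TOON: {toon_tokens} rough tokens")
print(f"Reduction: {1 - toon_tokens / json_tokens:.0%}")
```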

Next time you prepare a payload for an LLM, ask yourself:

“Would TOON make this cheaper and faster?”

Most of the time, it absolutely will.
