In real-time data engineering, the first step of any pipeline is data ingestion – getting data from multiple sources into a system where it can be processed and analyzed. Without a reliable ingestion mechanism, even the most advanced pipelines can collapse.

This is where GlassFlow Ingest comes in. It provides a simple yet powerful way to collect data from streaming platforms like Apache Kafka and push it into the GlassFlow ecosystem for further processing.

What is GlassFlow Ingest?

GlassFlow Ingest is the entry point of the pipeline. It’s designed to:

- Connect with data streams (commonly Kafka topics).

- Pull events in real time.

- Standardize and prepare them for further steps like Deduplication, Enrichment, and Sink.

Essentially, Ingest makes sure your raw data enters GlassFlow quickly, reliably, and without complexity.

The Problem It Solves

Without a managed ingestion layer, data engineers often face challenges such as:

- High complexity in configuring multiple connectors for different sources.

- Data loss risks when ingestion pipelines aren’t fault tolerant.

- Scaling bottlenecks when input streams increase in volume.

GlassFlow Ingest solves these by offering ready-to-use connectors, reliability, and scalability out of the box.

How GlassFlow Ingest Works

- Connects to Kafka (and other streaming sources) – Kafka is currently the primary supported source, so teams already running Kafka pipelines can adopt Ingest without changing their producers.

- Pulls events in real time – No need to manually manage offsets or partitions.

- Passes events to NATS inside GlassFlow – Under the hood, Ingest uses NATS for efficient internal event handling, while exposing a Kafka-first interface to users.

- Feeds the pipeline – The ingested data flows seamlessly to Deduplication, Enrichment, and eventually the Sink.
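The flow above can be illustrated with a small in-memory sketch. This is not GlassFlow's implementation: a standard-library `queue.Queue` stands in for the internal NATS layer, the enrichment step is omitted, and the function names and the `event_id` field are assumptions made for illustration.

```python
import json
import queue


def ingest(raw_messages, internal_queue):
    """Parse raw Kafka-style message bytes and hand them to the internal queue."""
    for raw in raw_messages:
        internal_queue.put(json.loads(raw))
    internal_queue.put(None)  # sentinel marking the end of this batch


def deduplicate(internal_queue, key="event_id"):
    """Yield each event once, keyed on a (hypothetical) unique ID field."""
    seen = set()
    while True:
        event = internal_queue.get()
        if event is None:
            break
        if event[key] in seen:
            continue  # drop repeats of an already-seen ID
        seen.add(event[key])
        yield event


def run_pipeline(raw_messages):
    """Ingest -> internal queue -> deduplication -> sink (a plain list here)."""
    internal_queue = queue.Queue()
    ingest(raw_messages, internal_queue)
    return list(deduplicate(internal_queue))
```

Calling `run_pipeline` with three messages, two of which share an `event_id`, returns two events in arrival order.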

Why Choose GlassFlow Ingest?

- Ease of Use – Minimal configuration compared to setting up custom Kafka consumers.

- Reliability – Built-in fault tolerance is designed to keep events from being lost when a component fails or restarts.

- Scalability – Handles increasing data loads without manual tuning.

- Integration – Works natively with other GlassFlow features, forming a unified pipeline.

Hands-On: Ingesting Kafka Data into ClickHouse with GlassFlow

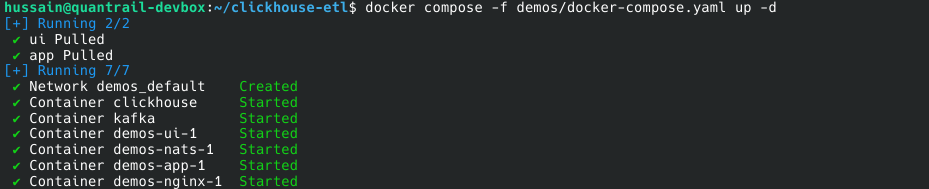

Infrastructure Setup:

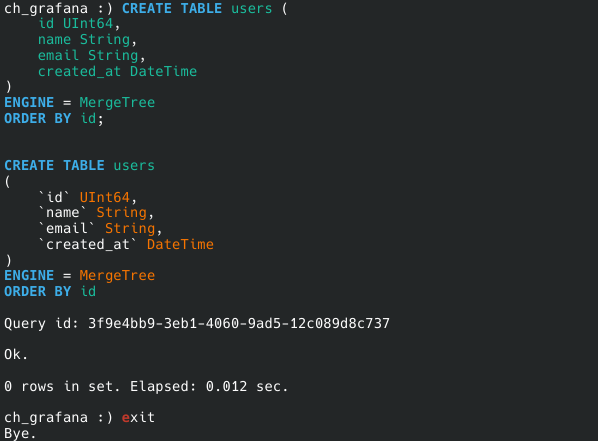

Preparing ClickHouse & Kafka:

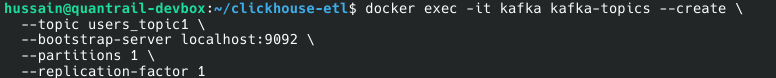

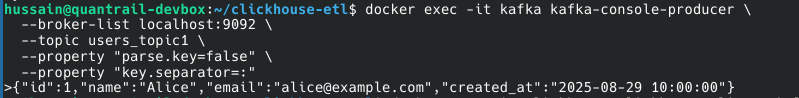

Creating a Kafka topic and producing sample events:

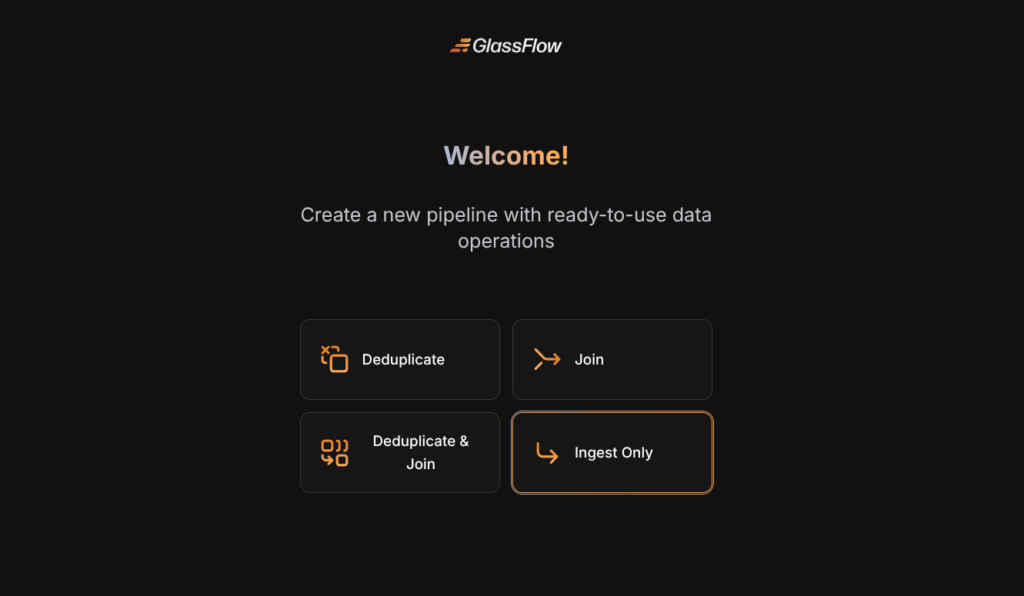
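For readers who prefer to script the sample data, here is a minimal sketch of generating JSON test events. The field names, the `user_events` topic name, and the `send` callable are all assumptions; in practice `send` would be a thin wrapper around your Kafka client's produce method.

```python
import json
import uuid
from datetime import datetime, timezone


def make_event(user_id, action):
    """Build one sample event; the schema is hypothetical."""
    return {
        "event_id": str(uuid.uuid4()),
        "user_id": user_id,
        "action": action,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }


def encode(event):
    """Serialize an event to UTF-8 JSON bytes, a common Kafka value format."""
    return json.dumps(event).encode("utf-8")


def produce(events, send, topic="user_events"):
    """Pass each encoded event to `send(topic, value_bytes)`; return the count."""
    count = 0
    for event in events:
        send(topic, encode(event))
        count += 1
    return count
```

Keeping the transport behind a callable makes the generator easy to dry-run, for example with `send=lambda topic, value: print(topic, value)`, before pointing it at a real broker.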

Configuring GlassFlow Ingest:

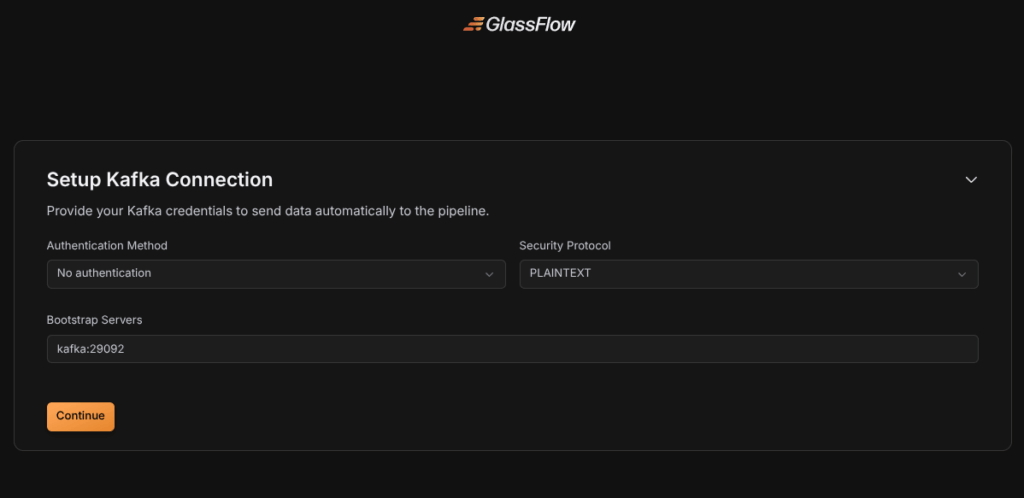

Kafka connection setup:

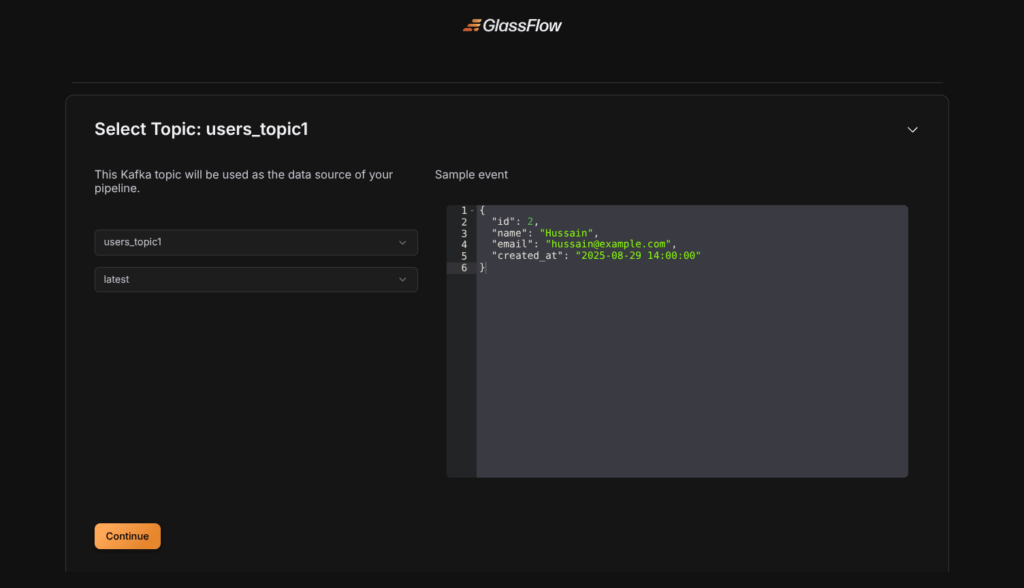

Kafka topic selection:

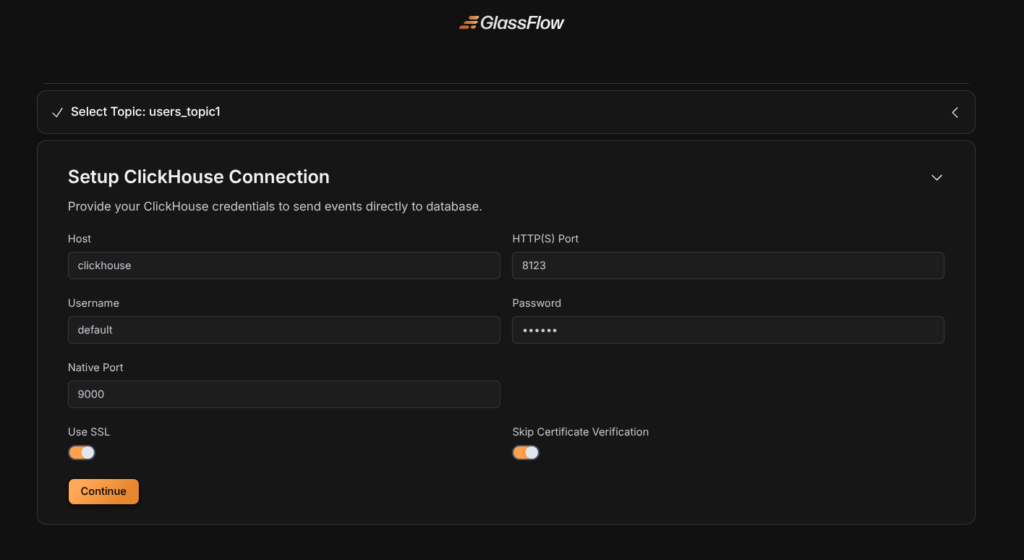

ClickHouse Connection:

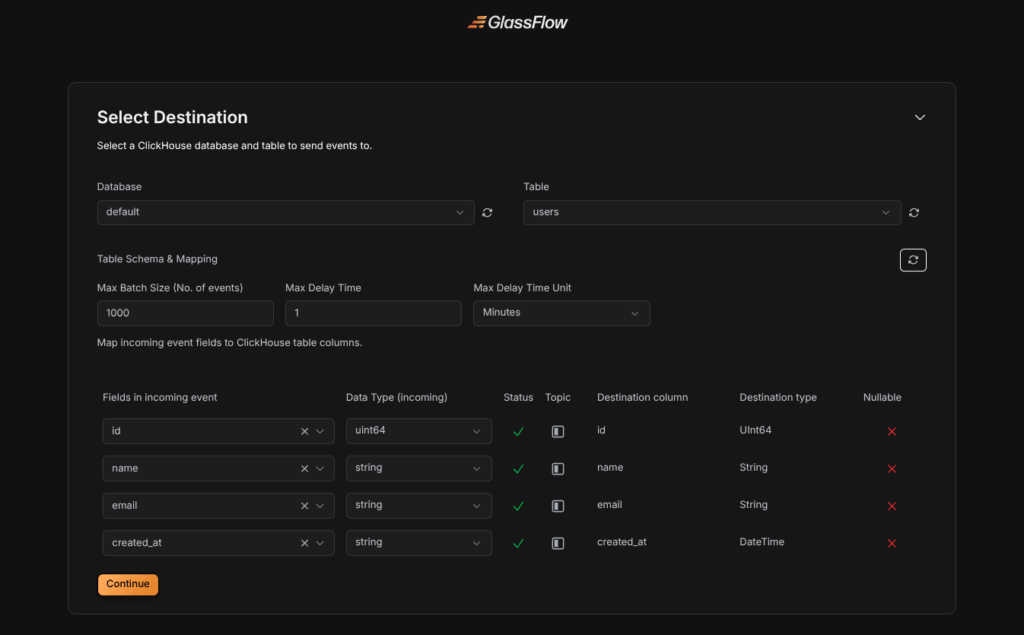

Destination mapping:

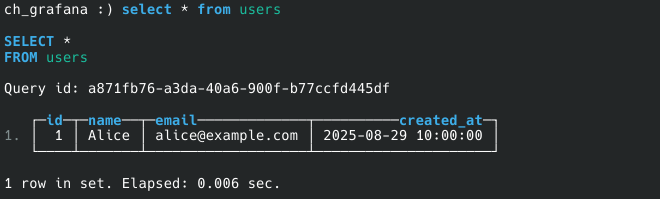
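Conceptually, destination mapping pairs each field of the incoming JSON event with a target column and type. The sketch below shows that idea in plain Python. It is not GlassFlow's mapping format, and the field names, column names, and converters are hypothetical.

```python
import json
from datetime import datetime

# Hypothetical mapping: source JSON field -> (destination column, converter)
FIELD_MAPPING = {
    "event_id": ("event_id", str),
    "user_id": ("user_id", str),
    "amount": ("amount", float),
    "created_at": ("created_at", datetime.fromisoformat),
}


def map_event(raw, mapping=FIELD_MAPPING):
    """Turn one raw JSON message into a row dict keyed by column name."""
    event = json.loads(raw)
    row = {}
    for field, (column, convert) in mapping.items():
        if field not in event:
            raise KeyError(f"missing field: {field}")
        row[column] = convert(event[field])  # coerce to the column's type
    return row
```

Failing loudly on a missing field is a deliberate choice for this sketch. It surfaces schema drift during testing instead of silently writing incomplete rows.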

Validating Data:
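One way to validate the result, sketched under assumptions, is to compare the IDs you produced to Kafka with the IDs that landed in ClickHouse (fetched, for instance, by selecting the ID column from the destination table). The `reconcile` helper below is hypothetical and works on plain lists of IDs.

```python
def reconcile(produced_ids, stored_ids):
    """Compare produced event IDs with IDs read back from the destination."""
    produced = set(produced_ids)
    stored = list(stored_ids)
    stored_set = set(stored)
    return {
        "missing": sorted(produced - stored_set),      # produced, never stored
        "unexpected": sorted(stored_set - produced),   # stored, never produced
        "duplicates": len(stored) - len(stored_set),   # extra copies in storage
    }
```

An empty `missing` list and a `duplicates` count of zero suggest that every sample event arrived exactly once.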

Real-World Use Cases

- Log Collection – Ingest server logs from Kafka topics for real-time monitoring.

- Clickstream Analysis – Capture website user behavior streams and feed them into analytics.

- IoT Data Streams – Ingest device data from Kafka for real-time dashboards.

Conclusion

GlassFlow Ingest is not just a connector – it’s the foundation of the GlassFlow pipeline. By simplifying the hardest part of real-time data engineering (ingestion), it allows teams to focus more on data quality, enrichment, and insights.

If you’re already using Kafka, GlassFlow Ingest makes it effortless to get your data streaming in and ready for the next steps.