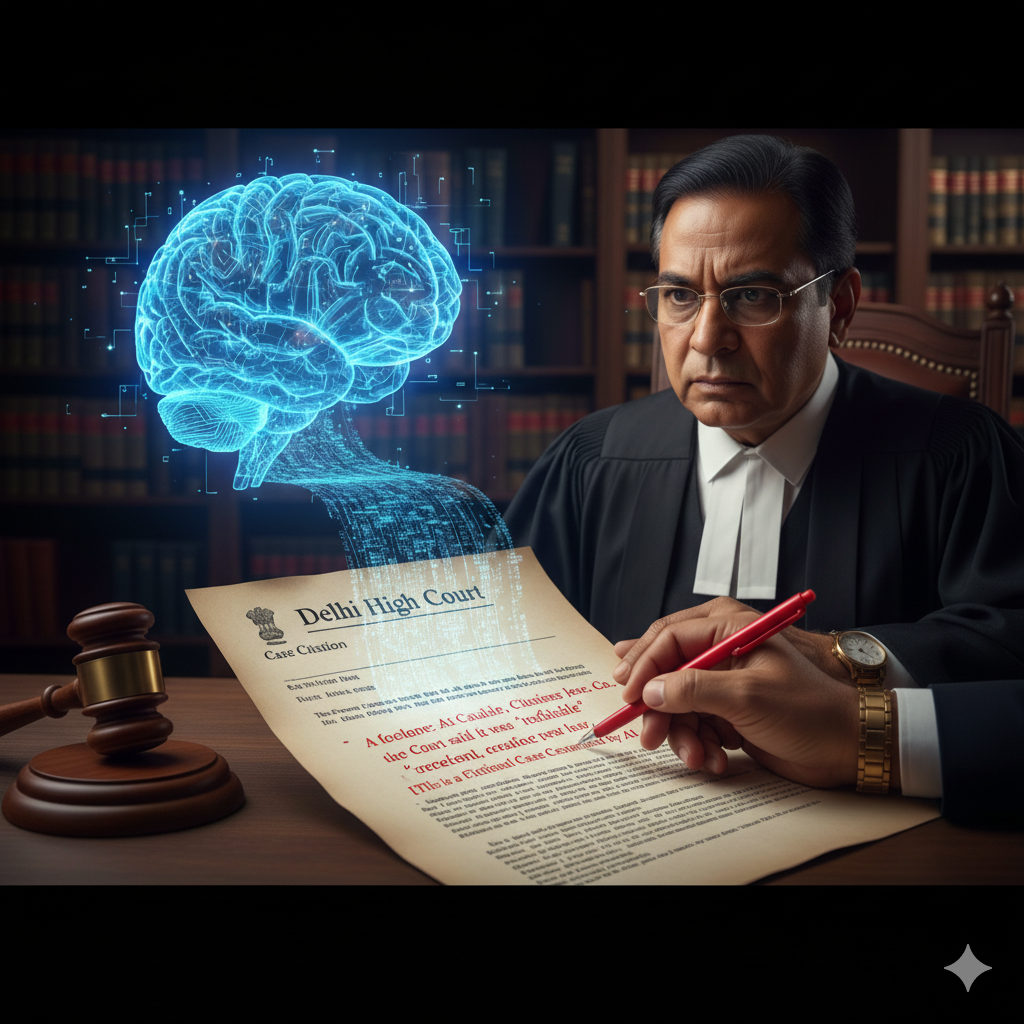

Bogus AI Case Citations in Delhi High Court Raise Red Flags

Artificial intelligence (AI) is reshaping industries worldwide, from healthcare and education to finance and law. However, its rapid adoption also brings risks, and a recent incident in the Delhi High Court has highlighted one of them. A petition filed in the court contained bogus legal citations generated by AI tools, embarrassing the petitioner and raising serious concerns about the unchecked use of generative AI in India’s judicial system.

What Happened?

In September 2025, a petitioner filed a case in the Delhi High Court that included references to multiple legal precedents. Upon review, it was discovered that several of these citations were completely fabricated. They had been produced by an AI system but were presented as legitimate case law. When questioned, the petitioner’s lawyers admitted to relying on AI assistance. The case was ultimately withdrawn after the court flagged the errors.

This is not an isolated event. Similar controversies have occurred globally. In the United States, for example, attorneys were fined in 2023 for submitting ChatGPT-generated citations to cases that turned out not to exist. The Delhi case is among the most high-profile examples in India so far, and it has sparked a debate about whether AI is ready for integration into sensitive legal processes.

Why This Matters

The case brings several critical issues into focus:

- Credibility of the Legal System: Courts depend on authentic and verifiable case law. Bogus references weaken trust in the judiciary and create confusion.

- Professional Responsibility: Lawyers are legally and ethically obligated to verify all information they present. Blindly trusting AI-generated content without cross-checking can amount to professional misconduct.

- AI Hallucinations: Generative AI tools are known to produce “hallucinations” (false but convincing outputs). Such errors may be relatively harmless in casual contexts, but they are dangerous in law, healthcare, or governance.

- Regulatory Gaps in India: Unlike some Western jurisdictions, India does not yet have a comprehensive legal framework governing AI usage in courts or law practice.

What Experts Are Saying

Senior members of the judiciary have also voiced concern. B.R. Gavai, now Chief Justice of India, has previously warned about the risks of over-reliance on AI in judicial work. In his view, AI should assist with legal research, translation, and streamlining processes, but final judgment and ethical reasoning must remain human responsibilities.

Prominent legal scholars add that AI can be a powerful support tool but should never be treated as an “authority.” Verification using official legal databases and libraries is non-negotiable. Professional bodies, they argue, must issue guidelines on the responsible use of AI in law to prevent further incidents.

Looking Ahead

The Delhi High Court incident should be seen as a wake-up call for India’s legal fraternity. AI is here to stay, but its role in law must be carefully regulated. Training programs for lawyers, strict court protocols, and clear government regulations are urgently needed to avoid similar blunders in the future.

AI is a tool, not a substitute for human expertise. In law, where trust, accuracy, and integrity are paramount, it must be handled with extreme caution. The message is clear: AI should support justice, not distort it.